What is OpenAI?

OpenAI is an AI research lab known for generative AI technologies such as ChatGPT and DALL-E 2, with a stated mission of steering AI development toward the benefit of humanity. Established in 2015 as a non-profit, it added a capped-profit arm in 2019 to fund its research, and it now offers a range of products aimed at automating tasks and improving efficiency across sectors. Innovations like ChatGPT have changed how people interact with AI by providing practical tools for text and image generation. The company also faces ethical challenges, including bias and the potential for misuse of AI, which underline the need for responsible AI development and use.

What is Azure OpenAI?

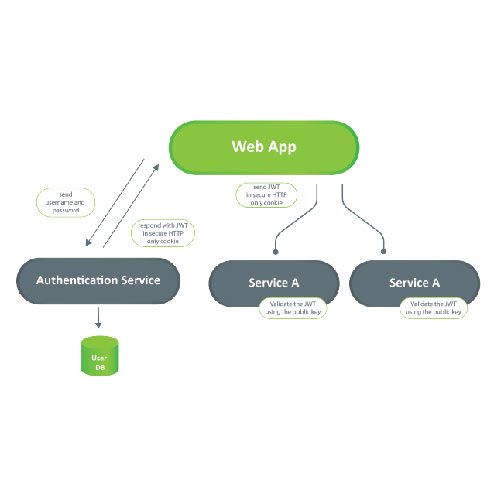

Azure OpenAI, offered by Microsoft, lets developers and businesses integrate OpenAI’s language and computer vision models into cloud applications. The service, known for powering apps like GitHub Copilot and Microsoft Designer, provides access to these models on a scalable, reliable platform. It can be used from many programming languages through REST APIs and SDKs, and it emphasizes responsible AI with features like content filtering. It is designed for building applications that understand and respond in natural human language, using generative AI models trained on extensive datasets.

What is RAG?

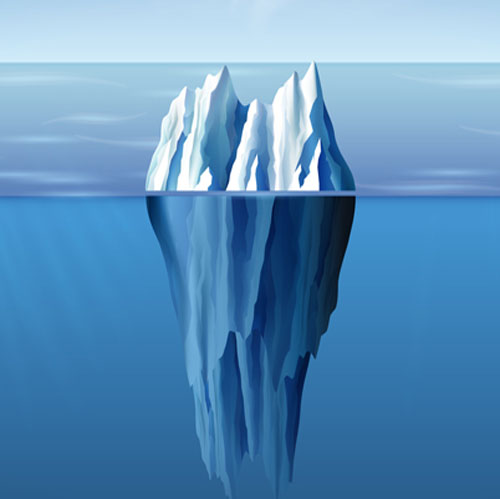

Retrieval-augmented generation (RAG) is an AI framework that enhances large language models (LLMs) like GPT by retrieving data from external sources at query time and adding it to the prompt. This improves response accuracy and keeps answers current, addressing LLM limitations such as outdated knowledge and hallucinations. Because the answer is grounded in retrieved passages, the system can point to its sources, which promotes user trust, and new knowledge can be added by updating the data source instead of retraining or fine-tuning the model, which keeps costs down. RAG is versatile and supports applications from chatbots to text summarization.
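To make the retrieve-then-generate idea concrete, here is a minimal sketch in plain Python. It is a toy, not how a production system works: the sample passages are made up for illustration, the keyword-overlap scoring stands in for a real search engine, and the function names (`retrieve`, `build_prompt`) are my own. The resulting prompt is what you would hand to the LLM.

```python
import re

# Illustrative sample passages; a real system would hold chunks of your own files.
DOCUMENTS = [
    "Tavche gravche is a baked bean dish seasoned with paprika and onion.",
    "Ajvar is a spread made from roasted red peppers.",
    "Baklava is a layered pastry with nuts and syrup.",
]


def tokenize(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, passages):
    """Put the retrieved passages in front of the question for the LLM."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


if __name__ == "__main__":
    question = "What is ajvar made from?"
    print(build_prompt(question, retrieve(question, DOCUMENTS, top_k=1)))
```

The important design point is that the model never needs to have seen the documents before: everything it should rely on arrives inside the prompt.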

Azure OpenAI + RAG

Azure OpenAI + RAG combines Azure OpenAI models with Azure AI Search to implement the retrieval-augmented generation pattern. In this custom RAG solution, Azure AI Search finds the information most relevant to the user's question or request, and the top-ranked search results are then sent to the large language model (LLM), which uses them to compose the answer.

This solution aims to provide highly relevant results cost-effectively, which makes it suitable for production applications. Because the OpenAI models work directly on your retrieved data, there is no need to train or fine-tune them first, so you can put your data to use with little effort. Azure OpenAI also prioritizes data privacy and security, offering end-to-end encryption and compliance with GDPR, HIPAA, and ISO 27001. It provides strict data control and monitoring and does not share your data, which supports data integrity, trust, and security in the RAG architecture.

Use Case: Build Your Own Helper for Cooking!

Before we start, I want to mention two other services that will help us build our own helper: Azure Blob Storage and Azure AI Search.

Azure Blob Storage is a cloud service offered by Azure for storing unstructured data, such as images, videos, PDFs, and other types of files. We will use this storage to store our documents.

Azure AI Search helps you quickly find what you are looking for in your data, using AI to make sense of text, images and more. It is like having a smart search engine for your own files. We will use this service to be able to easily search for the needed text in our files.

Now that we know the main ingredients and the services we will use, let’s start with our example.

First, we need to gather the relevant documents for our helper. In this example, we’ll explore traditional dishes from Macedonia. I discovered a PDF file that perfectly serves our purpose.

Upon reviewing the file, I found it packed with recipes for traditional Macedonian cuisine, complete with necessary ingredients and preparation methods.

With our source material in hand, let’s move on to Azure Blob Storage to set up a storage account.

Once the storage account is created, open it, and within the ‘Containers’ section, create a new container to upload the documents.
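If you would rather upload from a script than through the portal, the step can be sketched roughly like this. It assumes the `azure-storage-blob` package is installed and that you have the storage account's connection string; the helper names (`list_pdfs`, `upload_documents`) and the choice to upload only PDFs are my own assumptions for this example.

```python
from pathlib import Path


def list_pdfs(folder):
    """Return the PDF files in `folder`, sorted by name."""
    return sorted(Path(folder).glob("*.pdf"))


def upload_documents(connection_string, container_name, folder):
    """Upload every PDF in `folder` to an existing container.

    Returns the names of the uploaded blobs.
    """
    # Imported here so the rest of the script works without the SDK installed.
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient.from_connection_string(connection_string)
    container = service.get_container_client(container_name)

    uploaded = []
    for path in list_pdfs(folder):
        with path.open("rb") as data:
            # overwrite=True replaces a blob with the same name if it exists.
            container.upload_blob(name=path.name, data=data, overwrite=True)
        uploaded.append(path.name)
    return uploaded
```

Calling `upload_documents(conn_str, "recipes", "./docs")` would then push every PDF from a local `docs` folder into a container named `recipes`; both names are placeholders.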

The next step involves integrating our documents with Azure AI Search. To do this, we create an Azure AI Search service within Azure. After its creation, we navigate to it and start the data import process, which builds a searchable index from our files. We select Azure Blob Storage as our data source and point it to the previously created container.

This creation and data-importing process may take a few minutes, so patience is vital before creating the chatbot.
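Once indexing has finished, a quick query is a handy way to confirm the documents are searchable. The sketch below assumes the `azure-search-documents` package and an API key for the search service. It also assumes the index has `content` and `metadata_storage_name` fields, which is what I would expect the import wizard to create for blob data; check your own index schema and adjust. The function names are hypothetical.

```python
def summarize_hit(hit, max_chars=200):
    """Reduce one search result to its source file and a short snippet.

    Assumes the index exposes `content` and `metadata_storage_name` fields.
    """
    text = " ".join(str(hit.get("content", "")).split())
    return {
        "source": hit.get("metadata_storage_name", "unknown"),
        "snippet": text[:max_chars],
    }


def search_recipes(endpoint, index_name, api_key, query, top=3):
    """Run a full-text query and return the top results, summarized."""
    # Imported here so summarize_hit can be used without the SDK installed.
    from azure.core.credentials import AzureKeyCredential
    from azure.search.documents import SearchClient

    client = SearchClient(endpoint, index_name, AzureKeyCredential(api_key))
    results = client.search(search_text=query, top=top)
    return [summarize_hit(hit) for hit in results]
```

If a query for a dish you know is in the PDF returns nothing, the indexer has probably not finished yet.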

Now, we turn our attention to Azure OpenAI. If you haven’t yet created the service, do so now. Once it is set up, open Azure OpenAI Studio, a workspace for interacting with and deploying models. Within the studio, find ‘Deployments’ under Management and deploy the model that suits your needs. For this project, I’ll use gpt-3.5-turbo (listed as gpt-35-turbo in Azure), which is more than capable of performing our tasks.

Once the model is deployed, the final step is to link our GPT model with our data. To do this, navigate to ‘Chat’ under Playground and add our Azure AI Search index as a data source.
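The playground does this wiring for you, but the same link can be expressed in an API call. The sketch below only builds the request body for a chat completion that uses the search index as a data source. The field names reflect my understanding of the Azure OpenAI "On Your Data" request format (API version 2024-02-01) and should be checked against the current API reference; the function name and the sample values are placeholders.

```python
import json


def build_chat_request(question, search_endpoint, index_name, search_key, top_n=3):
    """Build a chat completion body that grounds answers in a search index.

    Field names are an assumption based on the 2024-02-01 "On Your Data" API.
    """
    return {
        "messages": [{"role": "user", "content": question}],
        "data_sources": [
            {
                "type": "azure_search",
                "parameters": {
                    "endpoint": search_endpoint,
                    "index_name": index_name,
                    "authentication": {"type": "api_key", "key": search_key},
                    # Limit answers to what the retrieved documents contain.
                    "in_scope": True,
                    # How many top-ranked search results to pass to the model.
                    "top_n_documents": top_n,
                },
            }
        ],
    }


if __name__ == "__main__":
    body = build_chat_request(
        "How do I prepare tavche gravche?",
        "https://<your-search-service>.search.windows.net",
        "recipes-index",
        "<search-api-key>",
    )
    print(json.dumps(body, indent=2))
```

This body would be posted to the chat completions endpoint of your model deployment, authenticated with your Azure OpenAI key.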

With everything in place, our cooking helper is ready for action. Below are some screenshots showcasing the interactions with our helper.

An important highlight of this setup, setting it apart from solutions like ChatGPT, is its focused response mechanism. The model was not trained on our documents. Instead, it is configured to answer strictly from the passages retrieved from them, which significantly reduces the chances of generating misleading or incorrect answers. Should a question fall outside the scope of the material, the helper admits to not knowing the answer rather than attempting an inaccurate response. This focus makes the information it provides more reliable and accurate.

Now, this won’t make you an expert chef, that’s for sure, but it still shows that with very little effort, you can build something that saves you time and makes you better at what you do. It does not have to be cooking, either. You can pick any domain and build a helper that puts the knowledge at your fingertips. I also want to mention that this comes at a cost, because some of these services are paid, so keep that in mind if you want to turn this into a production application.

Author

Martin Trajkov

Software Engineer