FuryGPU, a Custom Xilinx FPGA GPU, Runs Old Games on New Computers

FuryGPU, a Custom Xilinx FPGA GPU, Runs Old Games on New Computers

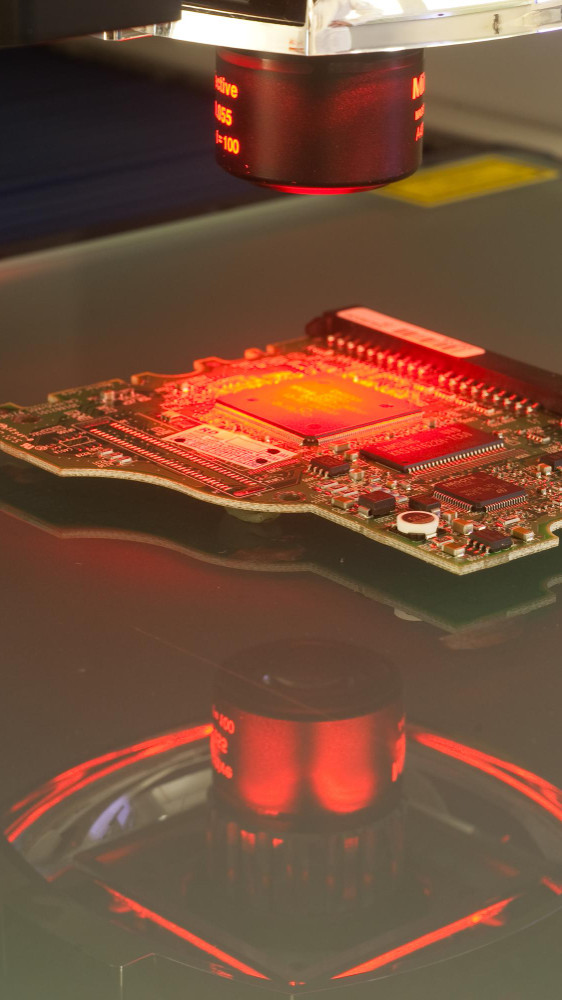

Looking into retro gaming often means navigating compatibility issues, particularly when it comes to classic PC titles. Despite the staggering power of modern GPUs, many struggle to accurately emulate games from the '90s. Traditionally, gamers have resorted to emulation software or piecing together vintage hardware setups. However, Dylan Barrie has spent the last four years developing an innovative solution to revolutionize the retro gaming experience. The solution is now known as FuryGPU.

How FuryGPU Brings Retro Games Back to Life

FuryGPU represents a breakthrough in gaming hardware, offering a PCIe graphics card custom-tailored for modern Windows PCs that exhibits hardware capabilities reminiscent of mid-1990s GPUs.

In a world where original components from the previous decades are no longer in production, Dylan Barrie approached the challenge with creativity and ingenuity. Rather than attempting to replicate outdated designs, he opted for a forward-looking solution: the AMD Zynq UltraScale+ FPGA. With the help of this advanced technology, Dylan went on a self-taught journey, mastering SystemVerilog to program the FPGA. He crafted a customized GPU architecture and engineered a custom PCIe card circuit board from scratch. Not stopping there, he meticulously crafted Windows drivers to seamlessly integrate the FuryGPU with modern operating systems.

Equipped with a four-lane PCIe connector and offering digital video output via DisplayPort and HDMI, FuryGPU delivers a gaming experience that effortlessly balances nostalgia with performance. Initial tests highlight impressive results, with titles like Quake running consistently at 60 frames per second, proof of FuryGPU’s capabilities. And while Doom’s performance has not been officially benchmarked, expectations run high for its smooth execution on this innovative hardware.

FuryGPU is not just a graphics card; it is a testament to the intersection of technology and passion, breathing new life into beloved classics while pushing the boundaries of what is possible in retro gaming. With FuryGPU, the past meets the future, offering gamers a bridge between nostalgia and modernity unlike anything seen before.

Our Expert Opinion

The arrival of FuryGPU marks the beginning of a new era for retro gaming enthusiasts, creating a thrilling fusion of vintage nostalgia and modern technology. Designed to seamlessly integrate with contemporary Windows PCs while faithfully resurrecting games from the mid-1990s, FuryGPU stands as a crucial point of innovation in the gaming landscape. Its significance lies not only in its ability to bridge generational divides but also in its preservation of gaming history, ensuring that classic titles endure for future generations to savor.

One of FuryGPU's most compelling features is its capacity to deliver smooth, immersive gameplay experiences for beloved classics, even on the latest Windows operating systems. By harnessing the power of custom GPU technology, users can bid farewell to the frustrations of emulation and the complexities of assembling era-specific hardware setups. Yet, the journey to reach FuryGPU's full potential was not without its challenges. Crafting custom-tailored Windows drivers was a formidable obstacle, testing the limits of the creator's expertise in graphics rendering. Moreover, convincing users to embrace a specialized GPU tailored specifically for retro gaming presents its own set of challenges, requiring a delicate balance of education and advocacy within the gaming community.

FuryGPU’s revolutionary design transcends mere emulation or replication of ’90s hardware. Using the power of advanced FPGA technology, FuryGPU sidesteps the limitations of outdated integrated circuits, offering a unique solution that combines compatibility with performance. Moreover, its open-source nature not only empowers community collaboration but also serves as a valuable resource for newcomers to FPGA technology, inspiring creators to explore the realm of custom hardware ICs with newfound freedom and creativity.

In a Nutshell

In conclusion, the creation of FuryGPU has the power to change the future of retro gaming completely. However, its enduring impact depends upon widespread acceptance and ongoing development efforts. As enthusiasts and innovators come together to support this groundbreaking technology, the horizon of possibilities for retro gaming continues to expand, building towards a future where the cherished classics of yesteryear remain as vibrant and accessible as ever.

Safeguarding Your Digital Front: Embracing Cybersecurity with NIS2 Directive

Safeguarding Your Digital Front: Embracing Cybersecurity with NIS2 Directive

Nowadays more than ever, the importance of cybersecurity cannot be overstated. As we navigate the vast expanses of the internet, our digital footprints leave trails vulnerable to exploitation by cyber threats. It's not a question of if, but when, a breach may occur. That's why it's crucial for organizations to adopt a proactive stance, embracing the mindset of assuming a breach while taking decisive measures to protect their valuable assets and sensitive data. Waiting for an attack to happen before responding is no longer an option in the face of relentless cyber threats. We must be prepared, fortifying our defenses, and actively seeking out vulnerabilities before they can be exploited.

Navigating the webinar TC Gill had the opportunity to expose the expertise of our guests on the webinar. Joining the conversation, experts like Senad Aruc and Goran Chamurovski highlight the importance of red and blue teams in cybersecurity. By understanding threats from both offensive and defensive perspectives, organizations can better fortify their defenses and anticipate potential vulnerabilities.

With the introduction of the NIS2 Directive, regulatory frameworks are evolving to address the growing threat landscape. This directive emphasizes the importance of cybersecurity risk management and incident response, urging organizations to conduct thorough risk assessments and develop comprehensive risk treatment plans tailored to their unique risk profiles.

Real-time adaptive risk posture tools emerge as essential allies in the battle against cyber threats. By continuously monitoring assets, detecting vulnerabilities, and correlating threat intelligence, organizations can maintain an up-to-date understanding of their risk posture and respond swiftly to emerging threats.

The discussion got to the touchpoint of security operations centers (SOCs) and their vital role in cybersecurity defense. To effectively combat sophisticated adversaries, SOCs must recruit individuals with specialized skills and leverage advanced technologies such as AI and machine learning. By adhering to the 5V principles of big data, SOCs can analyze vast amounts of data efficiently, identifying potential threats and vulnerabilities in real-time.

Furthermore, the design of network and application architecture should prioritize security from the outset. By following secure development lifecycles, organizations can integrate security measures seamlessly, minimizing vulnerabilities and strengthening overall resilience.

In conclusion, human-centric cybersecurity is essential for safeguarding our digital frontier. By embracing proactive measures, leveraging advanced technologies, and adhering to regulatory directives like NIS2, organizations can stay ahead of evolving cyber threats and protect what matters most.

For more details of the fruitful discussion our speakers and host had on the topics above, watch the video below.

Are you unsure about how the NIS2 Directive will impact your business’s cybersecurity practices? Don’t worry because we have your back! At IT Labs, we understand the importance of staying ahead of the regulations and protecting your digital assets. That’s why we offer both free consultation and comprehensive training by our cybersecurity experts. Don’t wait till last minute, start your NIS2 journey today!

A Recap of Our Experience at BEST Conference Day on Leadership in STEM

A Recap of Our Experience at BEST Conference Day on Leadership in STEM

Our recent presence at the BEST Conference Day on Leadership in STEM at the School of Electrical Engineering in Belgrade was nothing short of remarkable. Kostandina Zafirovska, our General Manager, was a keynote speaker at the event, and she discussed the development of personal skills for STEM leadership. Meanwhile, Isidora Babovic, our People Engagement Specialist, held an engaging soft skills workshop that helped students gain the interpersonal tools needed to thrive in the industry.

Engaging with the event atendees was an exhilarating experience, with lively discussions that showcased the passion and curiosity of the next generation of STEM leaders. The event’s organization was astonishing, from seamless logistics to the thoughtful curation of speakers and workshops, demonstrating the school’s commitment to excellence and its reputation as a hub for cutting-edge research and education in the field.

Our workshop focused on the essential aspects of professional growth and effective communication, topics that resonate deeply within the STEM community. We emphasized the importance of developing not just technical skills but also soft skills such as leadership, teamwork, and collaboration, which are crucial for success in STEM careers. It was heartening to witness the enthusiasm and active participation from everyone involved, with attendees eagerly engaging with us, participating in hands-on activities, and networking with peers throughout the day.

As the day unfolded, it became evident that each participant used this opportunity to learn something new and build meaningful connections and insights. The collaborative and inclusive nature of the event fostered a sense of community and encouraged attendees to support and learn from one another.

The feedback we received from students was overwhelmingly positive, highlighting the value of such gatherings in inspiring and empowering the next generation of STEM leaders. It was truly a memorable experience for all.

Intel’s Stand-Alone FPGA Company’s Name Revived as Intel Altera

Intel's FPGA Business Revives as Altera: A Strategic Spin-Off Unveiled

In a pivotal move for the semiconductor industry, Intel has breathed new life into its FPGA business by rebranding it as Altera. This development comes just two months after Intel spun off its Programmable Solutions Group into a stand-alone company, marking a significant shift in its strategic direction. With Altera rebranding, Intel signals a renewed focus on the FPGA market and its potential for growth and innovation.

A Return to Roots: The Altera Rebranding

The decision to revert to the original Altera name underscores Intel's commitment to revitalizing its FPGA business and positioning it for future growth. With plans underway to attract private investment, Intel aims to pave the way for Altera's potential return to the public market by 2026. Embracing its heritage, Altera aims to build upon its legacy of innovation while forging new pathways in the FPGA landscape.

Leadership for a New Era: Meet the Team

Leading the charge at Altera are seasoned Intel veterans Sandra Rivera, appointed as CEO, and Shannon Poulin, serving as COO. Their extensive experience within Intel augurs well for Altera's prospects as it embarks on this new chapter as an independent entity. With their leadership, Altera is poised to navigate the complexities of the semiconductor industry while driving forward with bold and strategic initiatives.

Strategic Imperatives: Driving Growth and Expansion

The spin-off of Altera serves a dual purpose for Intel. Not only does it provide the company with additional liquidity to fuel CEO Pat Gelsinger's ambitious comeback plan, but it also unlocks new avenues for business expansion. By targeting markets such as industrial, automotive, and aerospace and defense, Altera aims to diversify its revenue streams and capitalize on previously untapped opportunities. Through strategic partnerships and innovative solutions, Altera seeks to position itself as a key player in emerging markets and industries.

Product Focus: Innovating for Tomorrow's Needs

Crucially, the move positions Altera to focus on developing low-end and midrange FPGA products, addressing concerns around affordability and accessibility. This strategic shift is poised to democratize the use of FPGAs, empowering more companies to leverage their capabilities in system development. Altera's product lineup, including the Agilex 9 FPGA boasting industry-leading data converters, underscores its commitment to innovation and meeting the evolving needs of customers. With a relentless focus on R&D,

Altera is poised to deliver cutting-edge solutions that push the boundaries of what is possible in the FPGA market.

Expert Analysis: Assessing the Potential

Expert analysis underscores the potential benefits of Intel's strategic spin-off of Altera. By unlocking new growth opportunities and leveraging experienced leadership, the move sets the stage for both entities to thrive independently. However, success will hinge on factors such as market response and the effective execution of planned initiatives. As Altera charts its course as an independent entity, it must remain agile and adaptable in the face of evolving market dynamics and technological advancements.

Looking Ahead: Towards a New Era of Innovation

Looking ahead, the separation of Altera from Intel could pave the way for increased private investment and potentially influence similar strategic moves within the semiconductor industry. As Altera charts its course as an independent company, the stage is set for a new era of innovation and growth in the FPGA market. With a clear vision and strategic roadmap in place, Altera is poised to capitalize on emerging opportunities and cement its position as a leader in the field of programmable solutions.

Exciting Internship Opportunities at IT Labs – Apply Now!

Exciting Internship Opportunities at IT Labs

Are you a tech enthusiast looking to gain practical experience in the field of data engineering and AI? Do you want to be part of a dynamic team that will help you develop both tech and soft skills? If so, we have an amazing opportunity just for you!

We’re thrilled to announce that our popular 3-month internship program is now open for applications. This is your chance to work alongside our expert engineers, learn cutting-edge technologies and gain hands-on experience on real-world projects. The best part? Top-performing interns will have the opportunity to transition into full-time roles upon completion of the program.

About the Internship

Our internship program is designed to provide comprehensive learning experience within three months. You’ll work closely with dedicated mentors who will guide you through data engineering and machine learning/AI complexities. You will also gain first-hand experience in the dynamic field of technology, equipping you with the tools and skills needed to launch a thriving tech career.

What to Expect

Data Engineer

Gain a solid foundation in Data Engineering by learning how to design, build, and maintain efficient data pipelines that can handle large volumes of data. Master key technologies such as SQL and Python and become proficient with cloud platforms like AWS. These skills will empower you to extract valuable insights from complex datasets, streamlining the process of turning raw information into actionable knowledge.

ML/AI Engineer

Master the foundations of machine learning by exploring how to build intelligent systems that can learn from data and make predictions or decisions without being explicitly programmed to do so. Work on projects involving natural language processing, computer vision, and more, applying these powerful techniques to real-world problems. By the end of the program, you'll be a well-rounded AI engineer ready to drive innovation in your field.

Mentors

Martin Trajkov

Software Engineer

Senior Software Engineer at IT Labs, with 5+ years of experience transitioning from Java to the world of AI and Machine Learning. He has significantly contributed to enhancing products through implementation and integration of AI tools. Committed to continuous growth, Martin’s expertise spans AI, cloud computing, and software engineering.

Mario Liptov

Python ML Engineer

Python ML Engineer at IT Labs with 2+ years of experience in Machine Learning, Data Engineering, Data Science and Software Development. He specializes in Python and has experience in Azure and AWS. Mario has a passion for problem-solving and a strong desire to keep learning new technologies.

Who We're Looking For

We welcome applications from passionate techies and recent graduates who:

- Have a strong foundation in computer science or a related field.

- Demonstrate a keen interest in data engineering or AI.

- Eager to learn, adapt, and take on new challenges.

Don’t miss this incredible opportunity to kickstart your career in Data Engineering or Machine Learning/AI Engineering.

AMD Expands FPGA Line with Spartan UltraScale+: A New Era for Edge Solutions

AMD Expands FPGA Line with Spartan UltraScale+: A New Era for Edge Solutions

Development and innovation in the world of IT infrastructure is really kicking into gear, and the edge has emerged as a critical measure for success. AMD appears to be at the forefront of this revolution, extending its FPGA family with the Spartan UltraScale+, a series designed to meet the growing demands of edge computing. This new addition targets IoT devices, which, despite their size, are playing an increasingly significant role in shaping business operations and IT strategies.

The Edge - A Crucial Battleground for Chip Makers

Rob Bauer, AMD's Senior Manager of Product Marketing, highlights the multifaceted challenges and opportunities at the edge. The burgeoning volume of data necessitates advancements in security, privacy, developer accessibility, and the longevity of chip designs. Amidst shrinking product lifecycles, especially in consumer technology, these "table stakes" drive the evolution of processors destined for edge applications.

AMD's Answer to the Edge Computing Challenge

The Spartan UltraScale+ family, the sixth generation in AMD's Spartan series, marks a strategic push into edge computing spaces. Bauer emphasizes the silicon's edge-centric design philosophy, complemented by AMD's commitment to tool commonality. The Vivado Design Suite and Vitis Unified Software Platform support the new FPGAs, addressing a critical efficiency gap in the market, particularly against smaller FPGA vendors lacking comprehensive toolsets.

Technical Innovations and Advantages

Key to the Spartan UltraScale+ series is its power efficiency, achieved through a 16nm FinFET design, which promises up to a 30% reduction in power usage. This efficiency does not compromise performance, offering 1.9 times the fabric performance and enhanced I/O and memory bandwidth, essential for edge devices operating under stringent power constraints. Additionally, AMD has fortified the Spartan UltraScale+ with robust security features, including NIST-approved post-quantum cryptography algorithms, acknowledging the critical nature of data security at the edge.

Looking Ahead: Availability and Impact

While the anticipation for the Spartan UltraScale+ is high, its availability remains on the horizon, with evaluation kits expected in the first half of 2025. This timeline poses a challenge in a fast-paced industry, where the speed of innovation is crucial.

Expert Perspective: Navigating the Future of Edge Computing with AMD

The collaboration between BMI and Intel, using Agilex FPGAs in ORAN radio units, is a forward-looking initiative that reflects deep industry insights and a commitment to innovation. As big players in the field, the fusion of ORAN's open architecture with the advanced capabilities of Agilex FPGAs is a strategic alignment that promises to accelerate the adoption of 5G technologies, reduce operational costs, and enhance network performance and sustainability.

This partnership is a beacon of innovation, showing how strategic alliances can lead to breakthroughs that shape the future of telecommunications.

However, the success of these new FPGAs will ultimately hinge on their real-world application and the ability to meet the evolving needs of developers and end-users. AMD’s strategic focus on edge computing, tool ecosystem, and performance optimization underscores a promising direction in FPGA development. It’s an exciting time in the field, and we eagerly anticipate the impact of these developments on the future of edge computing.

Advancing 5G with ORAN: Bluewaves Mobility and Intel's Agilex FPGAs

Advancing 5G with ORAN: Bluewaves Mobility and Intel's Agilex FPGAs

The partnership between Bluewaves Mobility Innovation (BMI), a visionary Ontario-based startup, and tech giant Intel is setting new standards for 5G connectivity. By embedding Intel's ultramodern Agilex FPGA products into its next-generation ORAN Radio Units, BMI is not just creating products; it's sculpting the future of Open Radio Access Networks (ORAN) and, by extension, the 5G ecosystem.

So, what does this mean? Our FPGA experts dived deep into the subject to see just how this can change the industry.

The Strategic Imperative of ORAN in 5G

ORAN is not merely a technological innovation, but a paradigm shift in how mobile networks are conceived, deployed, and managed. This shift towards a more open, disaggregated network architecture is crucial for telecom infrastructures' future scalability, flexibility, and sustainability. As an FPGA expert, it's clear that the choice of Intel's Agilex FPGAs by BMI underscores a strategic move towards using unparalleled computational speed, efficiency, and adaptability in radio frequency (RF) signal processing and digital signal processing (DSP) applications critical for ORAN success.

Intel's Agilex FPGAs: A Game-Changer for BMI's ORAN Solutions

Intel's Agilex FPGA series stands out for its advanced features, including its adaptability to changes in workload, high-performance computing capabilities, and energy efficiency. These features make Agilex an ideal choice for the demands of 5G ORAN—where high data throughput, low latency, and power efficiency are paramount. Furthermore, Agilex FPGAs support BMI's goal of reducing the overall TCO and enhancing the environmental sustainability of mobile networks. The flexibility and power efficiency of Agilex FPGAs allow for more dynamic network management and optimization, which are crucial to achieving these goals.

Cementing a North American ORAN Ecosystem

BMI's commitment to manufacturing in greater Toronto and partnering with a global leader like Intel signifies more than business strategy; it reflects a broader vision for setting up a robust, secure, and resilient ORAN supply chain in North America. This approach strengthens local economies and ensures a higher level of control and security over the technology that will underpin critical national infrastructure.

A Showcase of Collaborative Innovation at MWC Barcelona 2024

The upcoming demonstration of BMI and Intel's technology at the Mobile World Congress in Barcelona is more than an exhibition; it's a testament to the power of collaboration in pushing the boundaries of what's possible in 5G technology. Attendees will have a unique opportunity to see how Intel's Agilex FPGAs enable BMI's ORAN Radio Units to meet the complex demands of modern telecom networks.

Expert Conclusion: Shaping the Future of 5G Together

The collaboration between BMI and Intel, using Agilex FPGAs in ORAN radio units, is a forward-looking initiative that reflects deep industry insights and a commitment to innovation. As big players in the field, the fusion of ORAN's open architecture with the advanced capabilities of Agilex FPGAs is a strategic alignment that promises to accelerate the adoption of 5G technologies, reduce operational costs, and enhance network performance and sustainability. This partnership is a beacon of innovation, showing how strategic alliances can lead to breakthroughs that shape the future of telecommunications.

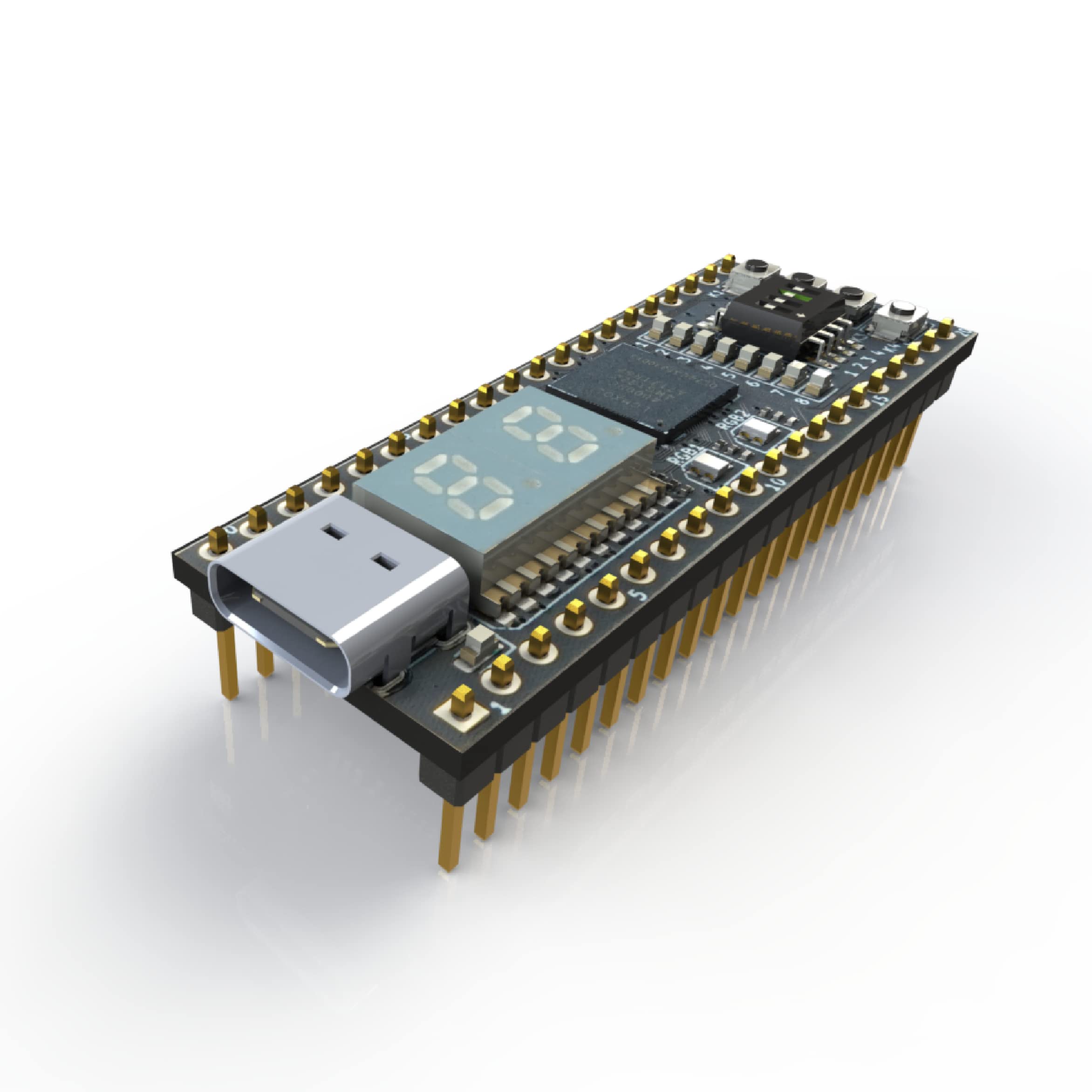

Microchip Launches PolarFire RISC-V and FPGA Development Board

Microchip Launches PolarFire RISC-V and FPGA Development Board

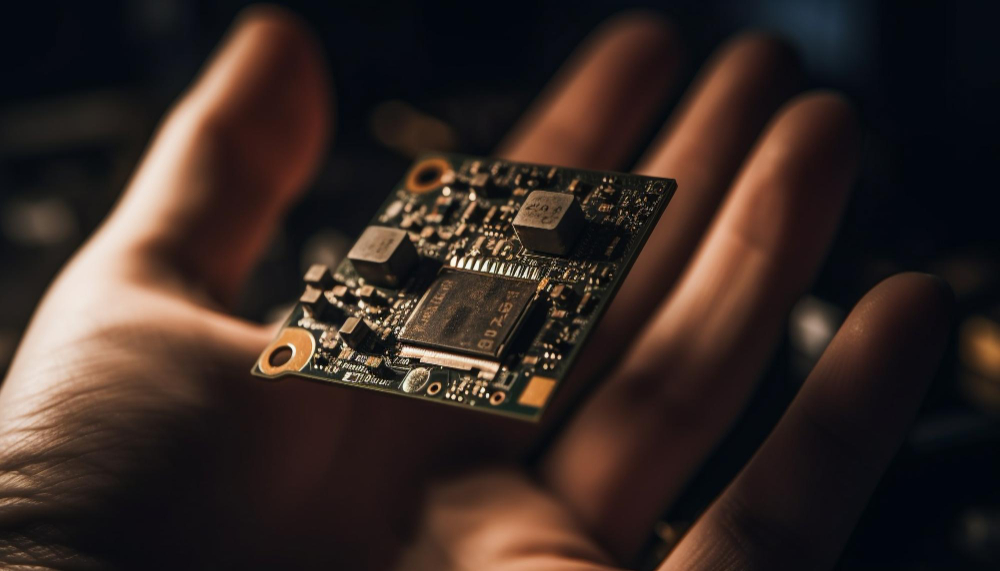

Microchip has unveiled the PolarFire SoC Discovery Kit, a groundbreaking development in the world of system design. This launch marks a significant step towards mainstream adoption of the RISC-V architecture, known for its open-source, well-supported ecosystem.

RISC-V: A Shift Towards Open-Source Architecture

The transition to RISC-V cores from proprietary instruction set architectures (ISAs) offers numerous benefits, including access to a rapidly expanding ecosystem. This move is particularly advantageous for embedded developers who have previously faced challenges due to limited hardware options for prototyping RISC-V designs.

PolarFire SoC Discovery Kit: Bridging the Gap

The PolarFire SoC Discovery Kit is designed to simplify access to RISC-V and FPGA hardware for engineers at all levels. From students just starting out to seasoned embedded engineers, this open-source development kit enhances the learning experience by combining the flexibility of FPGA with the efficiency of RISC-V cores.

Linux Meets RISC-V: A Powerful Combination

With solid Linux support, the PolarFire SoC allows users to explore new scenarios across a variety of applications. This includes everything from networking servers and data processing libraries to user interfaces for specialized apps running on the FPGA. The kit's programmable logic supports the integration of custom I/O, accelerators, video streaming IP, and multi-camera interfaces, all of which can be managed through dedicated Linux drivers.

Technical Specifications and Features

At the heart of the development board is the low-power PolarFire MPFS095T SoC FPGA, featuring 95K high-performance logic elements, 292 mathematical blocks, and four U54 RISC-V application cores with speeds up to 625MHz. The board is equipped with 1 GB of DDR4 memory, three UART ports, an RJ45 gigabit Ethernet port, and a microSD card slot for Linux booting. User interfaces include eight DIP switches, eight LEDs, and two push buttons, with expansion options such as a 40-pin Raspberry Pi connector, a 16-pin MikroE mikroBUS connector, and a MIPI video connector. The kit also supports a serial 7-segment 8-digit display via a 10-pin interface and is powered through a USB Type-C connector.

Empowering Innovators with Comprehensive Tools

Measuring just 10.4 cm x 8.3 cm, the PolarFire SoC Discovery Kit offers a comprehensive toolset for developers looking to explore the potential of RISC-V and FPGA technology. This kit not only facilitates the prototyping of RISC-V designs but also paves the way for innovative applications in a wide range of fields.

Conclusion: Pioneering the Future of System Design

Microchip's PolarFire SoC Discovery Kit is more than just a development board; it's a gateway to the future of system design. By making the RISC-V architecture more accessible to a wider audience and marrying it with the versatile power of FPGA, Microchip is not only addressing the needs of today's developers but also paving the way for the next generation of innovation. Whether for educational purposes, industry projects, or hobbyist experimentation, this kit represents a significant leap forward in the democratization of technology. With its robust support and expansive capabilities, the PolarFire SoC Discovery Kit stands ready to fuel the creative fires of engineers and developers around the globe, enabling them to bring their most ambitious projects to life.

Mercury's Groundbreaking Intel Agilex FPGA-Based System

Mercury's Groundbreaking Intel Agilex FPGA-Based System

Mercury has unveiled its latest innovation: the DRF2580 System-On-Module. This system, powered by the Intel Agilex 9 SoC FPGA, is a game-changer in direct RF signal processing, promising to redefine aerospace and defense systems with its broad radio frequency range, enhanced security, and accelerated decision-making capabilities.

The Power of Direct Digitization

Traditional analog signal down-conversion stages are now bypassed, thanks to the direct digitization of RF signals. This leap forward allows for real-time digital signal processing, high-speed converters, and expansive bandwidth digital data links. The outcome? A solution that's not only more power-efficient but also cost-effective.

Compact Design, Expansive Capabilities

Despite its small footprint, the DRF2580 is mighty. It features four channels and leverages 64GSPS ADCs and DACs, ensuring it's well-suited for a plethora of applications ranging from radar and communications to electronic warfare and SIGINT, among others. This versatility is critical in mission-critical fields where rapid, efficient data processing is paramount.

Transforming the RF Spectrum

Ken Hermanny, GM of Mercury’s Mixed Signal business, emphasizes the transformative impact of this technology: “These boards make direct digitization across the spectrum a reality. Previously, converter technology lagged, struggling to match the wide frequency range of RF input and output signals. Now, with higher conversion and processing rates, we can efficiently capture, process, and exploit larger portions of the RF spectrum at the edge.”

A New Era for Signal Processing

The launch of the DRF2580 System-On-Module marks a significant milestone in signal processing technology. By setting new benchmarks for power efficiency, cost-effectiveness, and rapid decision-making, Mercury not only addresses current technological gaps but also paves the way for future innovations in mission-critical applications.

Understanding FPGA becomes easier than ever with EIM Technology’s STEPFPGA-Based Electronics Education Bundle

Understanding FPGA becomes easier than ever with EIM Technology’s STEPFPGA-Based Electronics Education Bundle

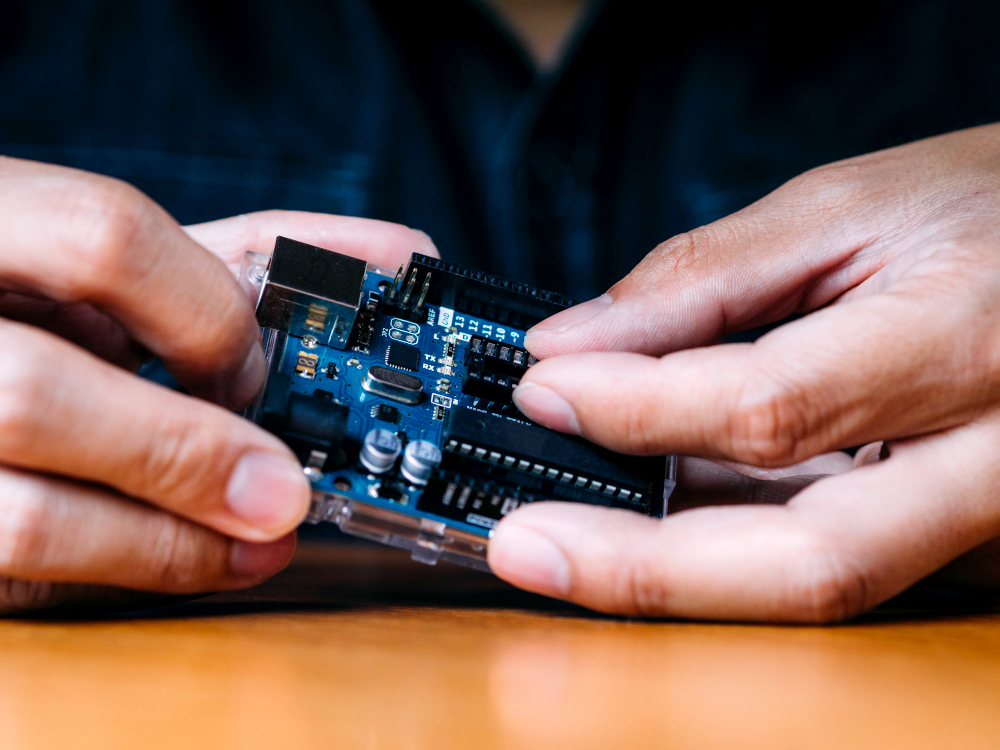

In 2022, EIM Technology initiated a transformative journey in FPGA education with a Kickstarter campaign that introduced an educational bundle designed to demystify digital design. Central to this bundle is the STEPFPGA board, a breadboard-friendly device built around the Lattice Semi MachXO2 MXO2-4000 FPGA, complemented by electronic components and an instructional guidebook. The enthusiastic reception from the public spurred EIM Technology to enhance this learning toolkit further.

The latest iteration of the bundle boasts an updated guidebook and an enriched browser-based Integrated Development Environment (IDE). This IDE now incorporates a simulation tool inspired by Altera’s Multisim, offering a significant advantage for learners to analyze, examine, and debug their designs in a virtual setting. This preemptive troubleshooting not only refines the learning experience but also ensures a higher caliber of design when transitioning to physical hardware.

In addition to the software upgrades, the hardware components have been reimagined to minimize soldering, shifting towards a more modular approach. This strategic decision allows learners to allocate more time to mastering the intricacies of digital design, rather than navigating the complexities of hardware assembly.

Anticipation is high as the updated hardware components are set to be dispatched following the closure of the Kickstarter campaign next month. The value proposition of this educational bundle is compelling, particularly for those new to the world of FPGAs and digital design. With the guidebook priced at approximately $44, the complete bundle at $125, and an upgrade option for previous bundle owners at around $88, EIM Technology is making advanced electronics education accessible and affordable. This initiative not only lowers the entry barrier to FPGA development but also equips learners with a comprehensive set of tools and resources to explore and create a myriad of digital designs.

Demystifying FPGA Development

As an FPGA expert, the move by EIM Technology to simplify and make more accessible the realm of FPGA development is both timely and commendable. FPGA technology, known for its flexibility and efficiency, plays a critical role in various applications, from consumer electronics to complex industrial systems. However, its steep learning curve has been a barrier for many. This educational bundle, with its intuitive guidebook and user-friendly IDE, significantly lowers that barrier, enabling enthusiasts and students alike to explore and innovate within the digital design space without the intimidation factor.

Shaping the Future of Electronics Education

The implications of such initiatives extend far beyond individual learners. By providing an affordable and accessible pathway to mastering FPGA technology, EIM Technology is contributing to a broader cultural shift in electronics education. This shift emphasizes practical, hands-on learning, where understanding the theoretical aspects of digital design is complemented by real-world application and experimentation.

In a future where technology continues to evolve at a breakneck pace, fostering a skilled workforce that can adapt and innovate is crucial. Tools and resources that demystify advanced technologies like FPGA are invaluable in this context. They not only empower individuals with the knowledge and skills to contribute to technological advancement but also ensure that the field of electronics remains vibrant, diverse, and forward-moving.

Empowering Innovators, One FPGA at a Time

The STEPFPGA-Based Electronics Education Bundle by EIM Technology represents a significant step forward in making FPGA technology accessible to all. It's a testament to the power of thoughtful educational resources in breaking down complex concepts and empowering the next generation of engineers and innovators. With such tools at their disposal, there's no limit to what aspiring digital designers can achieve.